This article originally appeared on DevOps.com

I arrived early for a meeting, and the pleasant security guard at AT&T suggested I take a look at their innovation museum while I waited. Among exhibits on motion picture sound technology and life-size photos of switchboard operators on roller skates stood a transistor prototype. The transistor was invented at Bell Labs in 1947, and it changed the world. In a 2014 article, Steve Wozniak called it the greatest innovation of all time.

But almost nobody needs to think about transistors anymore. The transistor had to become transparent and abstracted so that later generations could build great things at a higher level. The transistor has had a huge influence on our lives as an enabler, but that would not have been possible if people had to keep thinking about transistors.

Abstracting technologies is key to advancing to a higher level, making it possible to solve real business problems.

The language of a client-focused business is applications. If we want a business to move faster these days, we need to speed up the development and release of its applications. But one thing stands in our way: the infrastructure. Applications reside on infrastructure. They are installed on servers, bundled in containers, run on cloud instances, connected through networks, and dependent on storage.

If we want to speak the language of applications, we need to abstract them from the infrastructure, and make the infrastructure transparent.

Abstracting applications is the basis of every new approach to application development and release around us:

- Containers are all about abstracting services from specific infrastructure and making them mobile.

- Serverless takes this abstraction to the extreme.

- Public cloud makes it easier not to worry about owning physical infrastructure.

Technology screams, “Think applications, soon you will not have to worry about infrastructure!”

And yet, most of us mix applications and infrastructure in an unhealthy way when we try to automate them. The mix is unhealthy because it prevents reuse of automation assets and makes it very difficult to connect infrastructure usage to a business context.

To understand how this mixing shows up in practice, we need to look back at the evolution of infrastructure and application automation.

The Evolution of Infrastructure: Manual Deployment and the Inception of Its Automation

Behind any automation tool or standard stands a model. This model reflects how its creators originally perceived the problem they were trying to solve. It consists of the entities they defined as first-class citizens, the operations they wanted to apply to these entities, and the entities’ attributes.

“All models are wrong, some are useful,” said George E. P. Box in 1976. This is true for automation models too. Automation models are usually nice and clean when we start off, but as the problems we try to solve change, our models need to grow and adapt, until at some point they are no longer useful and we need to replace them with next-generation modelling approaches.

The Configuration Management Model

Configuration management tools such as Puppet and Chef were originally built to manage application deployment and configuration on static infrastructure. This new approach to Ops streamlined what had been the manual work of updating applications on servers. Better network connectivity helped this model thrive by making it possible to configure applications on servers remotely.

But within a decade, we witnessed the rapid rise of immutable infrastructure. Immutable infrastructure is an approach to deploying applications in which infrastructure components are replaced instead of updated. This approach partly pulled the rug out from under the configuration management model: with each update or change, the application must be redeployed onto newly provisioned infrastructure. This forced dynamic infrastructure provisioning into the configuration management model. Combining infrastructure automation and immutable infrastructure concepts with the configuration management model is difficult, since the model was never designed to accommodate them.
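To make the contrast concrete, here is a minimal Python sketch. The `Server` type and the `provision`, `update_in_place`, and `replace_fleet` functions are hypothetical stand-ins, not the API of Puppet, Chef, or any cloud provider. The mutable approach changes the application on an existing server, while the immutable approach provisions new servers and leaves the old ones untouched.

```python
from dataclasses import dataclass
from itertools import count

# Hypothetical ID counter standing in for a provider's provisioning API.
_next_id = count(1)


@dataclass
class Server:
    server_id: int
    app_version: str


def provision(app_version: str) -> Server:
    """Provision a brand-new (hypothetical) server running the given app version."""
    return Server(server_id=next(_next_id), app_version=app_version)


def update_in_place(server: Server, new_version: str) -> Server:
    """Mutable approach: reconfigure the application on the existing server."""
    server.app_version = new_version
    return server


def replace_fleet(fleet: list[Server], new_version: str) -> list[Server]:
    """Immutable approach: provision one new server per old one.

    The old servers are never modified; in a real system they would be
    decommissioned once traffic moves to the new ones.
    """
    return [provision(new_version) for _ in fleet]


if __name__ == "__main__":
    server = provision("1.0")
    update_in_place(server, "1.1")
    print(server)  # same server_id, new app_version

    old_fleet = [provision("1.0"), provision("1.0")]
    new_fleet = replace_fleet(old_fleet, "1.1")
    print(old_fleet)  # untouched
    print(new_fleet)  # new server_ids, new app_version
```

In the immutable case every change implies provisioning, which is why a model built around updating existing servers struggles to absorb it.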

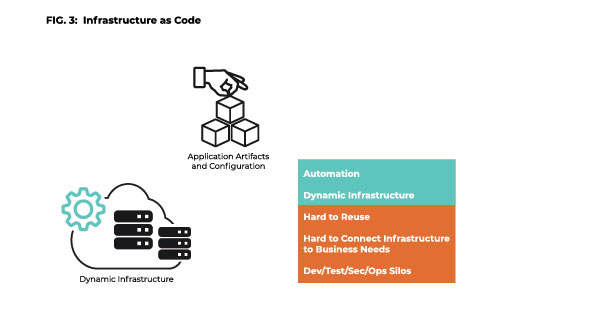

The Infrastructure-as-Code Model

Pure Infrastructure-as-Code tools were built to provision infrastructure dynamically. Terraform is an example of an open source tool that does this well. This model was designed with infrastructure in mind.

Whether we look at Terraform, AWS CloudFormation, or Azure Resource Manager (ARM) templates, we see that infrastructure is the starting point (defining network connectivity, VM instances, and services) and applications are an implicit addition. They are artifacts that happen to be deployed on, and are tightly coupled to, the infrastructure. We may look at a component that says “App A,” but it actually stands for “specific infrastructure with app A installed on it.”
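A minimal Python sketch of this infrastructure-first shape follows. It assumes a hypothetical `VirtualMachine` resource and placeholder install/start commands; it mirrors the structure of such templates but is not real Terraform, CloudFormation, or ARM syntax. The instance types are illustrative values. Note that “App A” exists only inside a startup script, so running it on a second cloud means copying its details, and asking which applications we run means parsing infrastructure.

```python
from dataclasses import dataclass

# Hypothetical infrastructure-first model. It mirrors the *shape* of IaC templates
# (a VM resource plus a post-provisioning script) but is not real Terraform,
# CloudFormation, or ARM syntax. The install/start commands are placeholders.


@dataclass
class VirtualMachine:
    name: str
    cloud: str
    instance_type: str
    network: str
    # The application exists only implicitly, as a script run after provisioning.
    startup_script: str = ""


# "App A" as an infrastructure-first template sees it: a VM that happens to run app A.
app_a_on_aws = VirtualMachine(
    name="app-a",
    cloud="aws",
    instance_type="t3.medium",
    network="vpc-main",
    startup_script="install app-a==2.3 && start app-a --port 8080",
)

# Running the same app on another cloud means copying its details into a new VM resource.
app_a_on_azure = VirtualMachine(
    name="app-a",
    cloud="azure",
    instance_type="Standard_B2s",
    network="vnet-main",
    startup_script="install app-a==2.3 && start app-a --port 8080",
)


def apps_in(resources: list[VirtualMachine]) -> set[str]:
    """To answer 'which applications do we run?', we have to parse infrastructure details."""
    return {vm.startup_script.split()[1] for vm in resources if vm.startup_script}


print(apps_in([app_a_on_aws, app_a_on_azure]))  # {'app-a==2.3'}
```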

This seems natural, because it’s the way things worked in the good old physical-infrastructure world: we fetch a box and install something on it. We focus on the infrastructure because it is our starting point. The application consists of the artifacts we put on the infrastructure and the scripts we run after we provision it. Presumably, the application cannot live without something to run on.

Infrastructure-as-Code tools evolved the way open source tools do: the community extended their base model because there was a need to automate application deployment and not just infrastructure. But it is becoming difficult to extend this model to reflect a world where applications are built to move between clustered servers, and where the starting point is no longer the infrastructure but the application’s requirements.

Mixing Infrastructure and Applications

Trying to fit infrastructure provisioning and application deployment into a single model that was originally designed for just one of them causes problems. Infrastructure sits at a lower level than applications: applications reside on infrastructure, and the same app can reside on different infrastructure configurations. Mixing the two in one model completely flattens this critical relationship.

Moreover, from a business perspective, it is important to make applications explicit. They are our product: our website, our mobile app. Applications need to be the center of attention and discussion, yet infrastructure-centric modelling leaves them in the shadow of infrastructure.

Four Great Reasons to Model Applications as a First-Class Citizen Right Now

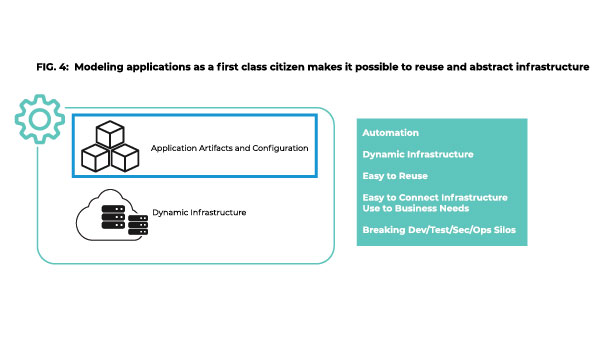

The models we use today are broken, and it is time for them to evolve. Whether your applications reside in containers or on cloud instances, use native cloud services, or combine all of these, applications should be first-class citizens, not artifacts installed as an afterthought on the infrastructure we started from.

The notion that applications should be abstracted from infrastructure doesn’t eliminate all the challenges of infrastructure provisioning and application deployment, but it creates a foundation for better and more scalable automation.

1. Achieve Application Re-Use

Modelling applications as a first-class citizen is the only way to make applications a re-usable element throughout the development and release pipeline.

If there’s one thing I have learned in my automation journey, it is that if automation isn’t reused frequently and consistently, you don’t get value for your money.

2. The Path to Multi-Cloud

Abstracting applications is also the best approach for moving application workloads between cloud providers. If everything starts from infrastructure, we need to start from scratch when we move. If our applications are abstracted and defined separately, their definition stays the same when we move; only the infrastructure changes.
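As a minimal sketch of what “defined separately” could look like, the Python below models the application and the infrastructure as distinct types and binds them only at deployment time. `Application`, `Infrastructure`, `Deployment`, and `bind` are hypothetical names, not an existing tool’s schema, and the instance types and memory sizes are illustrative. Moving from one cloud to another reuses the same `Application` object and swaps only the target.

```python
from dataclasses import dataclass

# Hypothetical application-first model for illustration; not an existing tool's schema.


@dataclass(frozen=True)
class Application:
    """What the business cares about, defined once and independent of any cloud."""
    name: str
    version: str
    port: int
    min_memory_gb: int


@dataclass(frozen=True)
class Infrastructure:
    """A deployment target; only this part changes when we switch providers."""
    cloud: str
    instance_type: str
    memory_gb: int
    network: str


@dataclass(frozen=True)
class Deployment:
    app: Application
    target: Infrastructure


def bind(app: Application, target: Infrastructure) -> Deployment:
    """Place an application on infrastructure after checking the app's requirements."""
    if target.memory_gb < app.min_memory_gb:
        raise ValueError(f"{target.instance_type} has too little memory for {app.name}")
    return Deployment(app=app, target=target)


app_a = Application(name="app-a", version="2.3", port=8080, min_memory_gb=4)

aws = Infrastructure(cloud="aws", instance_type="t3.medium", memory_gb=4, network="vpc-main")
azure = Infrastructure(cloud="azure", instance_type="Standard_B2s", memory_gb=4, network="vnet-main")

on_aws = bind(app_a, aws)
on_azure = bind(app_a, azure)  # moving clouds: same Application, different Infrastructure

print(on_aws.app == on_azure.app)        # True: the application definition did not change
print(on_aws.target == on_azure.target)  # False: only the infrastructure changed
```

The design choice here is that the application states its requirements (only memory, in this sketch) and candidate infrastructure is checked against them, so the starting point is the application rather than the infrastructure.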

3. The Path to Breaking Silos

The vision of Dev, Sec, and Ops all speaking the same language (code) is wonderful. But bringing everyone into the same code pool is not always easy. Applications and infrastructure are not the same thing, even though we can code both. Mixing them makes them unnecessarily hard to build and troubleshoot, and it blurs the boundaries of who is responsible for which code. Basing automation on a model that organizes the world in a way all stakeholders can understand can help overcome this barrier.

4. Connect Infrastructure to Business Needs

Infrastructure-as-Code is a huge breakthrough, but the bigger story is about making application development and release faster. This doesn’t undermine the importance of infrastructure; infrastructure is the foundation of everything. But if we want to solve business problems, we need to talk the language of the business, and that’s the language of applications. Our automation should be built in a way that reflects this.